Over the past few months, it has become visible everywhere: teams are no longer building "a chatbot" but AI-driven workflows. Think of an assistant that summarizes emails, classifies tickets, searches documents, or works through a process step by step using tools and integrations.

And that's exactly where the friction begins.

Not because AI can't do it, but because every provider has its own "plug".

The problem: same building blocks, different plugs

Almost all modern LLM platforms now use similar concepts: messages, tool calls, streaming output, multimodal input. Only... everyone describes them slightly differently.

The result: if you build a workflow for provider A, you're back to translating, adapting, testing and fixing edge cases for provider B. And that makes it harder to switch later based on price, performance, or compliance.
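To make the "different plugs" concrete, here is a minimal sketch of the adapter code teams end up writing today just to read a tool-call request. The two input shapes follow the OpenAI Chat Completions and Anthropic Messages formats as we understand them (real responses carry more fields than shown). The `ToolCall` class, the function names, and the `get_weather` tool are hypothetical.

```python
import json
from dataclasses import dataclass
from typing import Any


@dataclass
class ToolCall:
    """Hypothetical provider-neutral representation of one tool-call request."""
    call_id: str
    name: str
    arguments: dict[str, Any]


def from_openai_chat(message: dict) -> list[ToolCall]:
    # OpenAI Chat Completions: calls live in message["tool_calls"],
    # and the arguments arrive as a JSON-encoded *string*.
    return [
        ToolCall(tc["id"], tc["function"]["name"], json.loads(tc["function"]["arguments"]))
        for tc in message.get("tool_calls") or []
    ]


def from_anthropic(message: dict) -> list[ToolCall]:
    # Anthropic Messages API: calls are "tool_use" content blocks,
    # and the arguments arrive as an already-parsed object under "input".
    return [
        ToolCall(block["id"], block["name"], block["input"])
        for block in message.get("content", [])
        if block.get("type") == "tool_use"
    ]


# The same request ("call get_weather for Utrecht") in two provider shapes.
openai_msg = {
    "role": "assistant",
    "tool_calls": [{"id": "call_1", "type": "function",
                    "function": {"name": "get_weather", "arguments": "{\"city\": \"Utrecht\"}"}}],
}
anthropic_msg = {
    "role": "assistant",
    "content": [{"type": "tool_use", "id": "toolu_1", "name": "get_weather",
                 "input": {"city": "Utrecht"}}],
}

print(from_openai_chat(openai_msg))
print(from_anthropic(anthropic_msg))
```

Every additional provider means another adapter like these, plus the reverse direction for sending tool results back, which is exactly the translation work a shared specification aims to remove.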

What Open Responses tries to do

Open Responses is an open-source specification inspired by the OpenAI Responses API. The promise is simple:

Consistent 'agentic' workflows

Streaming, tool invocation and orchestration in a uniform way.

Easier to compare and route

Log, evaluate and choose per use case based on a shared format.

If this catches on, the discussion shifts from "which provider is best?" to "which provider is best for this task?".

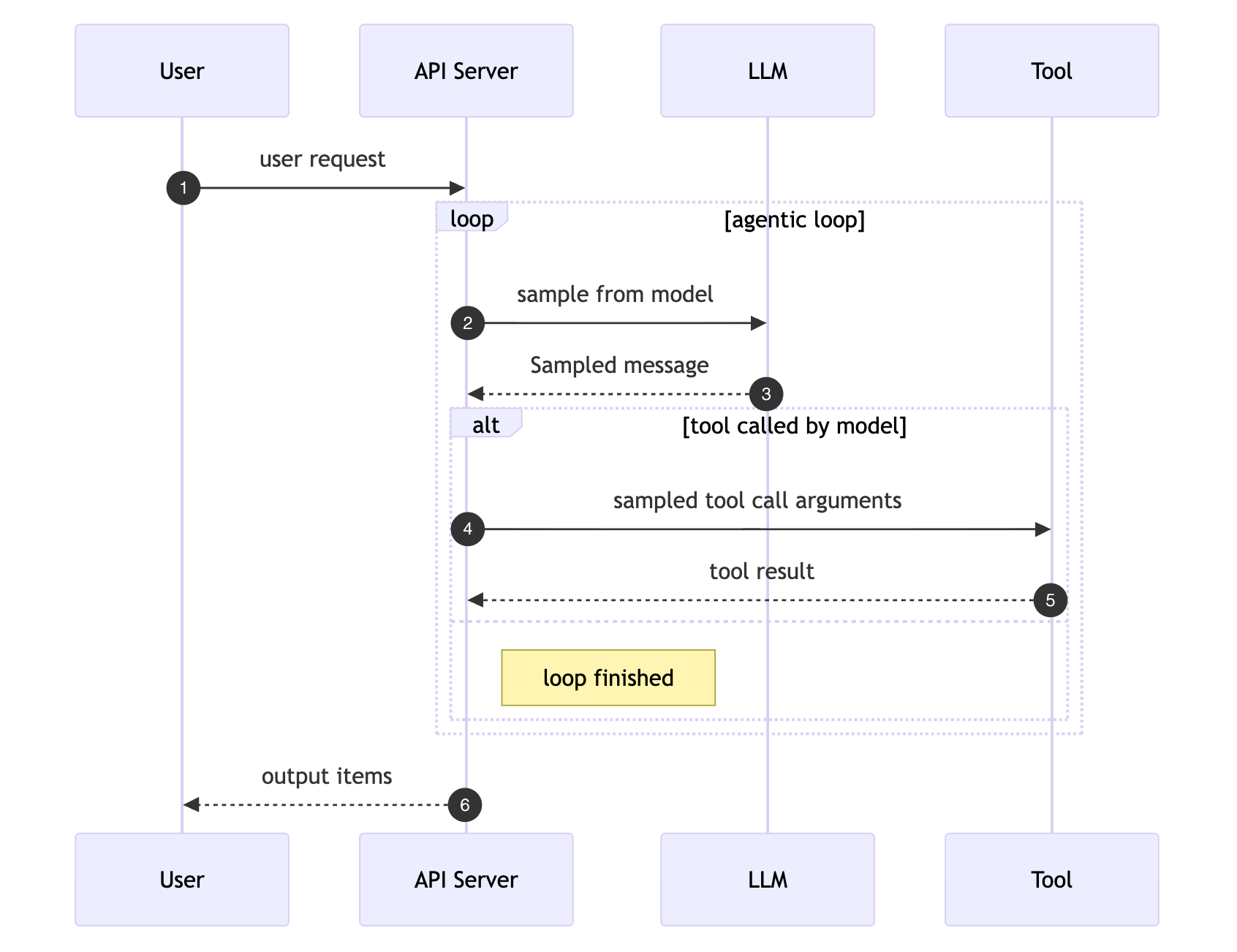

The agentic loop: how it works

Central to Open Responses is the "agentic loop": a standardized way for AI agents to interact with tools. The model requests a tool call, receives the result, and then decides whether it is done or needs another step.

Source: Open Responses Specification

This loop is exactly what you need for agent-like workflows: the model can independently call tools, process results, and continue working until the task is complete. Open Responses standardizes what that communication looks like, regardless of which model or provider you use.
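The loop can be sketched in a few lines of Python. This is a minimal, provider-free sketch: `fake_model` stands in for a real API call, `get_weather` is a hypothetical tool, and the item types (`message`, `function_call`, `function_call_output`) are modeled on the OpenAI Responses API that Open Responses takes as its inspiration. Check the specification itself for the exact field names.

```python
import json
from typing import Callable

# Hypothetical tool implementations, keyed by the name the model will request.
TOOLS: dict[str, Callable[..., str]] = {
    "get_weather": lambda city: f"cloudy, 12 degrees in {city}",
}


def fake_model(items: list[dict]) -> list[dict]:
    """Stand-in for a provider call: requests a tool once, then answers."""
    results = [i for i in items if i["type"] == "function_call_output"]
    if not results:
        return [{"type": "function_call", "call_id": "call_1",
                 "name": "get_weather", "arguments": json.dumps({"city": "Utrecht"})}]
    return [{"type": "message", "role": "assistant",
             "content": [{"type": "output_text", "text": f"Forecast: {results[-1]['output']}"}]}]


def agentic_loop(model: Callable[[list[dict]], list[dict]], user_input: str, max_steps: int = 5) -> str:
    items: list[dict] = [{"type": "message", "role": "user", "content": user_input}]
    for _ in range(max_steps):
        output = model(items)
        items.extend(output)  # the model's own output becomes part of the history
        calls = [o for o in output if o["type"] == "function_call"]
        if not calls:
            # No tool requested: the model considers the task complete.
            return "".join(part["text"]
                           for o in output if o["type"] == "message"
                           for part in o["content"] if part["type"] == "output_text")
        for call in calls:
            # Execute each requested tool and feed the result back, linked by call_id.
            result = TOOLS[call["name"]](**json.loads(call["arguments"]))
            items.append({"type": "function_call_output", "call_id": call["call_id"], "output": result})
    raise RuntimeError("agent did not finish within max_steps")


print(agentic_loop(fake_model, "What's the weather in Utrecht?"))
```

Swapping `fake_model` for a real provider client leaves the loop itself unchanged, which is the portability argument in miniature. The `max_steps` guard is a design choice worth keeping in any real agent, so a model that keeps requesting tools cannot loop forever.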

Community reaction: enthusiastic, with a sharp caveat

Positive reactions come mainly from builders who have long wanted a vendor-neutral standard. Simon Willison explicitly called it the standardization attempt he had been hoping for for years, precisely because it is a practical, JSON-like interface that many tools can build on.

And that adoption is already happening. LM Studio and Hugging Face, for example, published support for Open Responses on launch day, allowing you to access local models through the same type of interface.

Critical caveat

A recurring point is that standards often end up as the "lowest common denominator". The criticism goes like this: if you standardize on the "shape" of one provider, you may miss features that other providers do offer, or inherit limitations that aren't necessary.

That criticism isn't unreasonable. But this is also exactly how standards usually start: first remove 80% of the friction, then mature with extensions and variants.

Why this is interesting for organizations (and not just developers)

If you're using AI for real processes, you want to keep your options open. Open Responses is interesting because it's exactly about that:

Less lock-in in pilots

You can design a pilot so that you can switch providers later without demolishing the entire foundation. That helps when costs rise, policies change, or you need to handle data differently.

Better choices per client and context

One organization wants "best possible output" (cloud), another wants "maximum control" (local). If your interface is the same, you can more easily switch between those worlds.

Comparing and routing becomes practical

Not one holy provider, but smart selection: fast, cheap models for standard tasks and heavier models for complex cases. That only becomes really interesting when your tooling supports it well.
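If every model sits behind the same request format, routing largely reduces to a lookup. A minimal sketch, assuming such a shared format: the task names, provider labels, and model identifiers below are hypothetical placeholders, and "sensitive data stays on a local model" is just one example policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    provider: str  # hypothetical provider label
    model: str     # hypothetical model identifier


# Hypothetical routing table: task type -> model.
# With a shared interface, only this table changes when you swap providers.
ROUTES: dict[str, Route] = {
    "classify_ticket": Route("cloud-a", "small-fast-model"),
    "summarize_email": Route("cloud-a", "small-fast-model"),
    "complex_case": Route("cloud-b", "large-reasoning-model"),
}
DEFAULT = Route("cloud-a", "small-fast-model")
LOCAL = Route("local", "local-model")


def pick_route(task: str, sensitive: bool = False) -> Route:
    # Example compliance rule: sensitive data never leaves the local model.
    if sensitive:
        return LOCAL
    # Otherwise pick by task type, falling back to the cheap default.
    return ROUTES.get(task, DEFAULT)


print(pick_route("complex_case"))
print(pick_route("complex_case", sensitive=True))
```

Keeping the policy in one small function also gives you a single place to log which model handled which task, which is what makes comparing providers per use case practical.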

Open Responses + MCP: two sides of the same puzzle

A question you often hear: "How does Open Responses relate to MCP (Model Context Protocol)?" The short answer: they complement each other.

Open Responses

Standardizes how you talk to the model. Inputs, outputs, streaming, tool-call requests - all in one uniform format, regardless of provider.

MCP

Standardizes how the model talks to the outside world. Tool execution, context passing, and returning results - independent of which model you use.

Together: end-to-end portability

An application using Open Responses can uniformly invoke models from any provider and consistently parse tool-call requests in the model's streamed output.

When the model requests a tool call, an MCP-compliant orchestration layer can handle the execution, context passing, and result injection - independent of the underlying model provider.

The result: write your agent logic once, swap models via Open Responses, and swap or secure tool integrations via MCP. Without vendor-specific hacks.
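This division of work can be sketched as two small translation functions. The MCP side follows the `tools/list` and `tools/call` shapes from the MCP specification (tools described by `name`, `description`, and a JSON Schema `inputSchema`; calls sent as JSON-RPC requests). The model side assumes that Open Responses function tools and `function_call` items look like those in the OpenAI Responses API. The MCP server and its `search_documents` tool are hypothetical, and a real orchestration layer would also need an MCP client to actually send these messages.

```python
import json
from typing import Any


def mcp_tool_to_function_tool(mcp_tool: dict[str, Any]) -> dict[str, Any]:
    """Map one MCP tool definition onto a Responses-style function tool (assumed shape)."""
    return {
        "type": "function",
        "name": mcp_tool["name"],
        "description": mcp_tool.get("description", ""),
        # MCP already describes inputs with JSON Schema, so it can be passed through.
        "parameters": mcp_tool.get("inputSchema", {"type": "object", "properties": {}}),
    }


def tools_for_request(tools_list_result: dict[str, Any]) -> list[dict[str, Any]]:
    """Convert an MCP tools/list result into the `tools` array of a model request."""
    return [mcp_tool_to_function_tool(t) for t in tools_list_result.get("tools", [])]


def function_call_to_mcp_request(call: dict[str, Any], request_id: int) -> dict[str, Any]:
    """Turn a model's function_call item into an MCP JSON-RPC tools/call request."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": call["name"], "arguments": json.loads(call["arguments"])},
    }


# Example tools/list result from a hypothetical MCP server.
mcp_result = {"tools": [{
    "name": "search_documents",
    "description": "Full-text search over the document store",
    "inputSchema": {"type": "object",
                    "properties": {"query": {"type": "string"}},
                    "required": ["query"]},
}]}

print(tools_for_request(mcp_result))
print(function_call_to_mcp_request(
    {"type": "function_call", "call_id": "call_1",
     "name": "search_documents", "arguments": "{\"query\": \"invoices 2024\"}"},
    request_id=1,
))
```

Neither function mentions a model provider, which is the point: the provider-specific part lives behind Open Responses, the tool-specific part behind MCP.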

Why this is relevant for Think Ahead

At Think Ahead, we build AI pilots and agent-like workflows that quickly move to a working demo, with real data and real processes. In that world, "portability" isn't a buzzword; it's simply risk reduction.

Open Responses is primarily a signal to me: the market is moving towards a layer where you no longer build "OpenAI apps" or "Anthropic apps", but workflow apps with interchangeable models.

Will this become the standard? Too early to say.

But the need for a "USB-C for AI agents" is crystal clear.

Want to build AI workflows that aren't locked to one provider?

We're happy to help think through architecture, tool choice, and vendor-independent implementations.